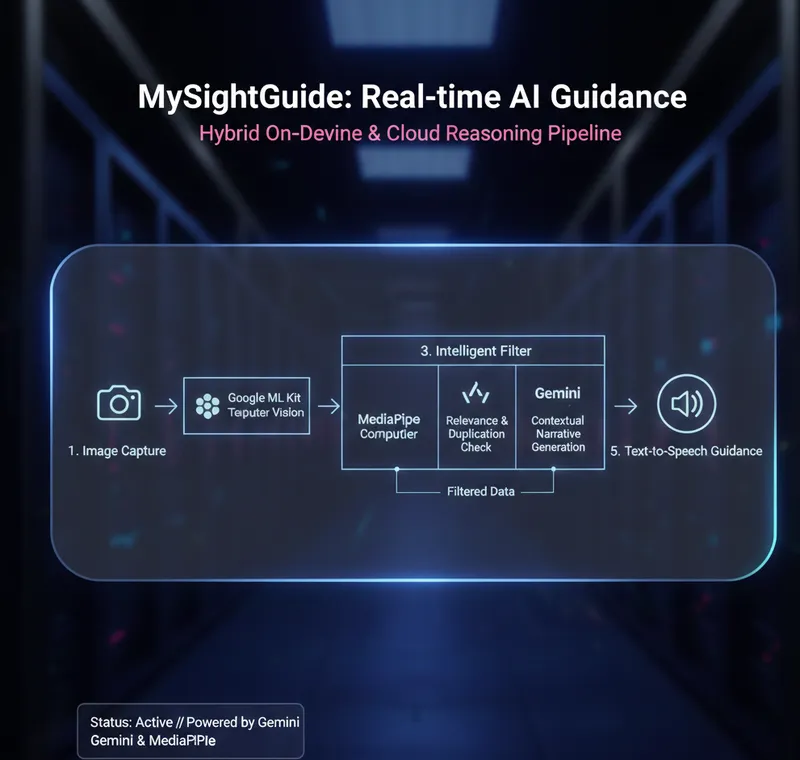

MySightGuide: Vision AI

A hybrid processing pipeline that helps visually impaired users perceive their surroundings by combining on-device computer vision with Gemini-based reasoning.

System Logic Flow

Inference Engine

Runs real-time object detection with MediaPipe, using optimized TFLite models that target latency under 100 ms.
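One way to check the < 100 ms target in practice is to time every detector call and compare a high percentile of the samples against the budget. The sketch below is plain Python with a stand-in `detect` callable in the place where the app would invoke its MediaPipe/TFLite model. The 95th-percentile criterion and the helper names (`timed_inference`, `percentile`, `meets_budget`) are assumptions, not part of the original design.

```python
import math
import time
from typing import Any, Callable, List, Tuple

LATENCY_BUDGET_MS = 100.0  # the "< 100 ms" target from the text


def timed_inference(detect: Callable[[Any], Any], frame: Any) -> Tuple[Any, float]:
    """Run one detector call and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = detect(frame)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms


def percentile(samples_ms: List[float], pct: int) -> float:
    """Nearest-rank percentile of the recorded latencies."""
    if not samples_ms:
        raise ValueError("no latency samples recorded")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_budget(samples_ms: List[float], budget_ms: float = LATENCY_BUDGET_MS,
                 pct: int = 95) -> bool:
    """True if the pct-th percentile latency is within the budget."""
    return percentile(samples_ms, pct) <= budget_ms


# Usage with a stand-in detector (hypothetical; the app would call its TFLite model here).
latencies = []
for frame in range(20):
    _, ms = timed_inference(lambda f: [("person", 0.9)], frame)
    latencies.append(ms)
print(meets_budget(latencies))
```

Checking a percentile rather than the mean keeps a few slow frames from hiding behind a good average.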

Semantic Reasoning

The Gemini API turns raw detection data into human-centric spatial descriptions that support context-aware navigation.
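The hand-off from detection to reasoning can be pictured as follows: reduce each box to a compact spatial fact (label, rough proximity, clock-face direction), then give Gemini those facts instead of raw coordinates. This is a hypothetical sketch. The `Detection` type assumes normalized top-left `x, y, w, h` boxes, and the clock mapping, area thresholds, and prompt wording are illustrative choices. The Gemini API call itself is omitted.

```python
from dataclasses import dataclass
from typing import List

# Five sectors across the horizontal field of view (hypothetical scheme).
CLOCK_POSITIONS = ["10 o'clock", "11 o'clock", "12 o'clock", "1 o'clock", "2 o'clock"]


@dataclass
class Detection:
    label: str
    score: float
    x: float  # left edge, normalized 0..1 (assumed convention)
    y: float  # top edge, normalized 0..1
    w: float  # width, normalized
    h: float  # height, normalized


def clock_direction(det: Detection) -> str:
    """Map the horizontal centre of the box to a clock position."""
    cx = min(max(det.x + det.w / 2, 0.0), 1.0)
    index = min(int(cx * len(CLOCK_POSITIONS)), len(CLOCK_POSITIONS) - 1)
    return CLOCK_POSITIONS[index]


def proximity(det: Detection) -> str:
    """Use box area as a crude distance cue: larger boxes are assumed closer."""
    area = det.w * det.h
    if area >= 0.25:
        return "very close"
    if area >= 0.05:
        return "nearby"
    return "in the distance"


def describe(dets: List[Detection], min_score: float = 0.5) -> List[str]:
    """Keep confident detections, largest (presumably closest) first."""
    kept = sorted((d for d in dets if d.score >= min_score),
                  key=lambda d: d.w * d.h, reverse=True)
    return [f"{d.label}, {proximity(d)}, at {clock_direction(d)}" for d in kept]


def build_prompt(dets: List[Detection]) -> str:
    """Wrap the spatial facts in an instruction for the reasoning model."""
    facts = "\n".join(f"- {line}" for line in describe(dets)) or "- no confident detections"
    return ("You are guiding a visually impaired pedestrian. "
            "Using only these detections, give one short spoken instruction.\n" + facts)


print(build_prompt([Detection("door", 0.9, 0.4, 0.2, 0.2, 0.6)]))
```

Sending short, pre-digested facts keeps the request small and stops the model from having to interpret pixel coordinates.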

SentriGrade: OCR Logic

Extraction Pipeline

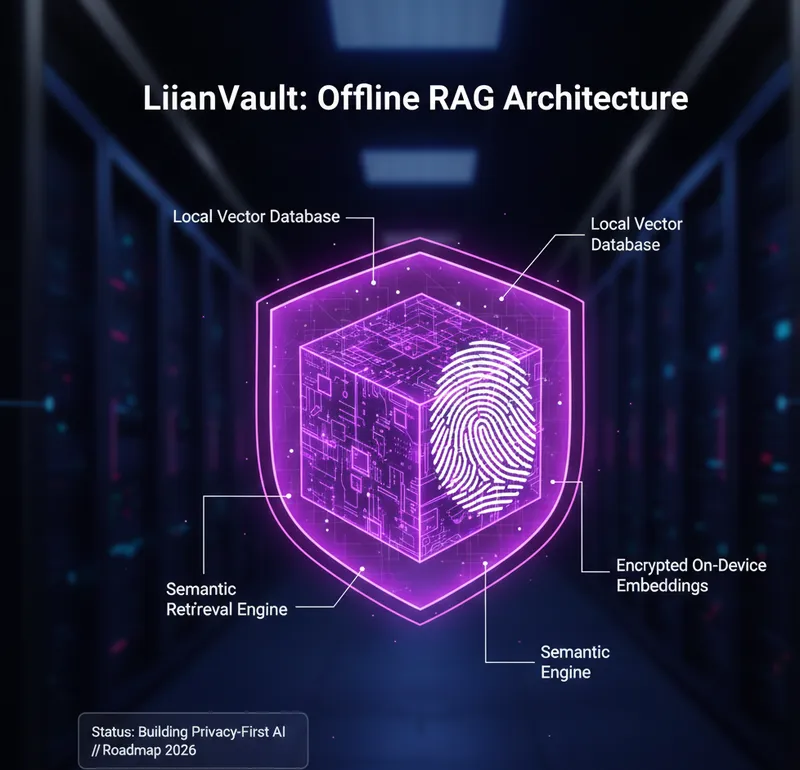

LilianVault: Offline RAG

On-Device Knowledge Base
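The following is a minimal sketch of the retrieval half of an offline RAG loop. It assumes a small passage store held on the device, scores each passage against the query, and prepends the best matches to the prompt. Plain term-frequency vectors with cosine similarity stand in here for whatever embedding model the project actually uses. `LocalKnowledgeBase` and `augment_query` are hypothetical names.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def vectorize(text: str) -> Counter:
    """Term-frequency vector over lowercase alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity of two sparse term-frequency vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class LocalKnowledgeBase:
    """Hypothetical in-memory index; nothing leaves the device."""

    def __init__(self, passages: List[str]):
        self.passages = passages
        self.vectors = [vectorize(p) for p in passages]

    def retrieve(self, query: str, k: int = 2) -> List[Tuple[float, str]]:
        """Return up to k (score, passage) pairs with a non-zero score, best first."""
        q = vectorize(query)
        scored = [(cosine(q, v), p) for v, p in zip(self.vectors, self.passages)]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [pair for pair in scored[:k] if pair[0] > 0.0]


def augment_query(kb: LocalKnowledgeBase, query: str, k: int = 2) -> str:
    """Prepend the retrieved passages to the question before generation."""
    context = "\n".join(passage for _, passage in kb.retrieve(query, k))
    return f"Context:\n{context}\n\nQuestion: {query}"
```

Swapping `vectorize` for a local embedding model would leave the rest of the loop unchanged.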

Gemini Live Multimodal

A high-concurrency architecture for **Native Android** that streams real-time video frames and audio buffers over WebSockets to the Gemini 2.0 Flash model for ultra-low-latency spatial reasoning.
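Here is a sketch of what could travel over that WebSocket. It assumes the Live API's JSON message format: an initial setup message names the model, and each frame or audio buffer then follows as a JSON text message carrying base64-encoded bytes. The messages are built in Python for brevity; an Android client would send the same strings. The field names are assumed to follow the `BidiGenerateContent` message shape from the Gemini 2.0 Flash Live API era. Treat them, the MIME strings, and the placeholder model id as assumptions to verify against the current API reference, since the message schema has changed between versions.

```python
import base64
import json

# Assumed field names, modelled on the Live API's BidiGenerateContent messages.
MODEL_ID = "models/gemini-2.0-flash-exp"  # placeholder model id


def setup_message(model: str = MODEL_ID) -> str:
    """First message on the socket: choose the model and ask for spoken replies."""
    return json.dumps({
        "setup": {
            "model": model,
            "generationConfig": {"responseModalities": ["AUDIO"]},
        }
    })


def media_chunk_message(payload: bytes, mime_type: str) -> str:
    """Wrap one captured buffer as a realtime input chunk."""
    return json.dumps({
        "realtimeInput": {
            "mediaChunks": [{
                "mimeType": mime_type,
                "data": base64.b64encode(payload).decode("ascii"),
            }]
        }
    })


def audio_message(pcm16: bytes, sample_rate: int = 16000) -> str:
    """Raw 16-bit PCM microphone audio (rate assumed to be 16 kHz)."""
    return media_chunk_message(pcm16, f"audio/pcm;rate={sample_rate}")


def frame_message(jpeg: bytes) -> str:
    """One camera frame, assumed to be JPEG-encoded before sending."""
    return media_chunk_message(jpeg, "image/jpeg")
```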

Multimodal Streaming Pipeline

01. CAPTURE

CameraX & AudioRecord pipe raw bytes into a MediaCodec encoder.

02. STREAM

A persistent, bidirectional WebSocket connection manages full-duplex communication.

03. SYNTHESIS

An on-device AudioTrack plays back the AI's speech in real time with low latency.
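The three stages can be sketched end to end in plain Python. `chunk_pcm` stands in for the capture step (audio only, before any MediaCodec encoding). `full_duplex` runs the send and receive loops concurrently, as the persistent connection does. `PlaybackBuffer` mimics feeding an AudioTrack after a short prebuffer. Everything here is hypothetical. That includes the 16-bit PCM formats (assumed to be 16 kHz in and 24 kHz out), the 20 ms chunk size, the 60 ms prebuffer, the `None` end-of-stream marker, and the in-memory queues plus `fake_model`, which replace the real network session.

```python
import asyncio
from typing import List

BYTES_PER_SAMPLE = 2       # 16-bit mono PCM (assumed)
SAMPLE_RATE_IN = 16_000    # assumed microphone rate
SAMPLE_RATE_OUT = 24_000   # assumed rate of the model's speech


def chunk_pcm(buffer: bytes, chunk_ms: int = 20, rate: int = SAMPLE_RATE_IN) -> List[bytes]:
    """01 CAPTURE: split raw PCM into fixed-duration chunks (the last may be shorter)."""
    size = rate * BYTES_PER_SAMPLE * chunk_ms // 1000
    if size <= 0:
        raise ValueError("chunk_ms too small for this sample rate")
    return [buffer[i:i + size] for i in range(0, len(buffer), size)]


class PlaybackBuffer:
    """03 SYNTHESIS: start playback once prebuffer_ms of speech has arrived."""

    def __init__(self, prebuffer_ms: int = 60, rate: int = SAMPLE_RATE_OUT):
        self.threshold = rate * BYTES_PER_SAMPLE * prebuffer_ms // 1000
        self.pending = bytearray()
        self.played = bytearray()  # stands in for bytes written to an AudioTrack
        self.started = False

    def push(self, pcm: bytes) -> None:
        self.pending.extend(pcm)
        if not self.started and len(self.pending) >= self.threshold:
            self.started = True
        if self.started:
            self.flush()

    def flush(self) -> None:
        """Write everything still buffered, e.g. at the end of the model's turn."""
        self.played.extend(self.pending)
        self.pending.clear()


async def full_duplex(chunks: List[bytes], uplink: asyncio.Queue,
                      downlink: asyncio.Queue, player: PlaybackBuffer) -> None:
    """02 STREAM: the send and receive loops run concurrently on one session."""

    async def sender() -> None:
        for chunk in chunks:
            await uplink.put(chunk)
        await uplink.put(None)  # end-of-input marker (an assumption of this sketch)

    async def receiver() -> None:
        while (reply := await downlink.get()) is not None:
            player.push(reply)
        player.flush()

    await asyncio.gather(sender(), receiver())


async def fake_model(uplink: asyncio.Queue, downlink: asyncio.Queue) -> None:
    """Stand-in for the remote session: answers each chunk with 10 ms of silence."""
    while (await uplink.get()) is not None:
        await downlink.put(bytes(SAMPLE_RATE_OUT * BYTES_PER_SAMPLE // 100))
    await downlink.put(None)


async def demo() -> tuple:
    uplink: asyncio.Queue = asyncio.Queue()
    downlink: asyncio.Queue = asyncio.Queue()
    player = PlaybackBuffer()
    mic = bytes(SAMPLE_RATE_IN * BYTES_PER_SAMPLE // 10)  # 100 ms of silent input
    chunks = chunk_pcm(mic)
    await asyncio.gather(full_duplex(chunks, uplink, downlink, player),
                         fake_model(uplink, downlink))
    return len(chunks), len(player.played)


if __name__ == "__main__":
    print(asyncio.run(demo()))  # prints (5, 2400)
```

In the demo, the 2,400 bytes of reply never reach the 60 ms prebuffer, so they are played by the final `flush()`. This is why a real player also needs an end-of-turn flush, not just a threshold.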